Reports from organisations across the globe, including one last week from the UN Climate Agency, carry stark warnings of the deteriorating state of the Earth’s climate. These are inherently statistical observations, and here Alec Erskine of the RSS Climate Change and Net Zero Task Force unpacks some of the difficulties of making such estimates.

Guest blog by Alec Erskine.

Estimating climate change is not easy. There’s a huge amount of literature on the subject and a fair amount of disagreement on the detail, though pretty much everybody says we are getting close to 1.5 degrees. The RSS’s explainer is here.

But what does 1.5 degrees actually mean?

A recent paper, still under development, goes into these questions in commendable detail (

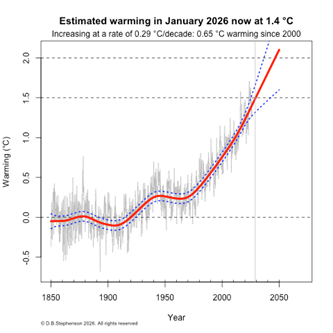

ESSDD - How well can we quantify when 1.5 °C of global warming has been exceeded?). With its 68 authors, this paper confirms that it takes a lot of scientists. That’s sixty eight – not a misprint. In fact, the answer is 1.40 degrees in 2024 (with 1.34 degrees of this attributed to human influence). The 95%ile confidence limits on this were 1.23 to 1.58. Getting to this estimate involved a lot of serious analysis.

It was agreed in Paris in 2015 that the long-term increase in global temperature should be kept well below 2 degrees above pre-industrial levels, with efforts to limit it to 1.5 degrees, but the definition was left with some ambiguity. (The choice of these round figures is also somewhat difficult to explain – why not 2.1 or 1.9 – but we’ll leave that for another day.)

The main issues of contention are:

- Where are we starting?

- How do you average the spatial data across the world?

- What statistical method do we use to get the long-term trend line?

- Are we removing any ‘natural’ change that is not to do with human activity?

There is a general consensus that we are starting at the average of 1850 to 1900. This is not before all industrial activity, but the dataset becomes weaker as we go earlier, so it is a good baseline.
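As a minimal sketch of what "relative to the 1850 to 1900 average" means in practice, the snippet below turns a series of temperatures into anomalies against that baseline. The function name `anomalies` and the numbers in `toy` are invented for illustration and are not real observations.

```python
from statistics import mean


def anomalies(series, base_start=1850, base_end=1900):
    """Return {year: temperature minus the mean over the baseline years}."""
    baseline = mean(
        temp for year, temp in series.items() if base_start <= year <= base_end
    )
    return {year: temp - baseline for year, temp in series.items()}


# Invented illustrative values in degrees C, not real observations.
toy = {1850: 13.7, 1875: 13.8, 1900: 13.9, 2024: 15.2}
result = anomalies(toy)
print(round(result[2024], 2))
```

Moving the baseline window shifts every anomaly by the same constant, which is why agreeing on the starting period matters before comparing estimates.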

Averaging temperature data across the world is complicated. Measurement locations are constantly changing, and sometimes the height above ground is important. Interpolation between measurements can be done by different methods. In the UK, we tend to favour a dataset called HadCRUT5, though there are alternatives which produce slightly different time series.
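To illustrate one reason why spatial averaging is not a plain mean, here is a hedged sketch of area weighting on a regular latitude-longitude grid, where cells shrink towards the poles and are therefore weighted by the cosine of their latitude. It does not reproduce how HadCRUT5 or any other dataset is actually processed; `global_mean` and the two toy cells are assumptions made up for this example.

```python
import math


def global_mean(cells):
    """Area-weighted mean of (latitude_in_degrees, anomaly) pairs.

    Assumes a regular latitude-longitude grid, so each cell's area is
    proportional to the cosine of its latitude.
    """
    weights = [math.cos(math.radians(lat)) for lat, _ in cells]
    total = sum(w * anomaly for w, (_, anomaly) in zip(weights, cells))
    return total / sum(weights)


# Invented values: modest warming at the equator, strong warming at 60 degrees.
toy_cells = [(0.0, 1.0), (60.0, 3.0)]
weighted = global_mean(toy_cells)
simple = sum(anomaly for _, anomaly in toy_cells) / len(toy_cells)
print(round(weighted, 3), simple)
```

In this toy case the unweighted mean is noticeably higher than the area-weighted one, because the fast-warming high-latitude cell covers only half the area of the equatorial cell.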

The statistical methods available are surprisingly complicated. A simple straight-line fit using old-fashioned least squares (as in Excel) is one of the options, but we then have to decide where to start the line, and a straight line cannot capture a curving change. The paper above uses a weighted average of seven methods, some of which are based purely on the monthly time series, while others draw on additional data. One of these is shown in the figure above.
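The point about the starting year can be sketched with a small example. It fits an ordinary least-squares line to an invented, gently curving series and shows that the estimated trend depends on where the line starts. The series, the helper names `ols_slope` and `trend_per_decade`, and the chosen start years are all assumptions for illustration; this is not one of the seven methods used in the paper.

```python
def ols_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


years = list(range(1970, 2025))
# Invented curving series (degrees C), not real observations.
temps = [0.0002 * (y - 1970) ** 2 + 0.01 * (y - 1970) for y in years]


def trend_per_decade(start_year):
    """Least-squares trend in degrees per decade, using data from start_year on."""
    pairs = [(y, t) for y, t in zip(years, temps) if y >= start_year]
    xs, ys = zip(*pairs)
    return 10 * ols_slope(xs, ys)


early = trend_per_decade(1970)
late = trend_per_decade(2000)
print(round(early, 3), round(late, 3))
```

Because the toy series curves upwards, the line started in 2000 is steeper than the one started in 1970, even though both are fitted to the same underlying data.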

We generally just talk about the overall change, but it is useful and important to try and separate out the bit we have no control over – the rest is the anthropogenic part, caused by us. A recent paper by Foster & Rahmstorf (2026) analysed the curve of the change and concluded that the anthropogenic rate increased, with statistical significance, at the beginning of 2014 from 0.25 degrees per decade to 0.34 degrees per decade (using the HadCRUT5 dataset). The red curve in the graph above shows a similar steepening.

The issue of whether it is steepening or not is tricky, as is splitting the rise into its natural and anthropogenic components, but one thing all 68 authors are agreed on is that the climate is changing and we will be at 1.5 degrees very soon.